Most data scientists don't need to become LLM engineers, but the data scientist who understands how retrieval works, can evaluate whether an LLM output is trustworthy, and knows when to push back on the need for fine-tuning will be prepared to be involved in discussions around LLMs in their organization. This guide walks through what actually matters.

What level of LLM knowledge is enough for data scientists?

The honest answer is that it depends on your team's size, structure, and what they’re building with LLMs, but there's a baseline that applies almost universally regardless of role.

Most data scientists should understand how a RAG system works. Not necessarily how to build one from scratch, but enough to have a real conversation when something isn’t working right. This helps you understand where it breaks, how to test it, and work with the developers or MLE’s that might be doing the building. LLMs require more collaboration than machine learning projects on tabular data. You’ll have a better understanding of how the system could be improved and where to look.

Beyond that baseline, the scope of LLM knowledge needed scales with your role. On smaller teams, data scientists are owning more of the stack by necessity. On larger teams with dedicated ML engineering functions, the expectation shifts toward conceptual literacy over hands-on implementation. However, it is possible that in the right role, someone with a data scientist title could be building a RAG application or could be the person responsible for data cleaning, chunking, or evaluation.

What is RAG, and why is it a practical skill for beginners?

RAG stands for Retrieval Augmented Generation. The system is retrieving information from an internal knowledge base you set up (typically in a vector database). This information is sent to the LLM model with the system prompt (internal prompt instructions for tone, guardrails, etc.), and the user query. Finally, the LLM gives a response.

This helps address two common limitations of LLMs: First, the LLMs training data has a cutoff date, and second, the LLM has no knowledge of your organization's private data -- internal policies, product documentation, research, customer records. RAG gives the model access to that information without retraining it or fine-tuning. You update the knowledge base, not the model.

Learning RAG is a practical skill for data scientists because it is often the first and most practical approach when a company wants to build an LLM application that gives insight into their internal data. When leadership asks "can we get an AI to answer questions about our policies, our customer records, our research?" That is a RAG problem.

Understanding how the pieces fit together means you can contribute to scoping, testing, and debugging that work even if you are not the one writing the production code. Even watching a simple demo that brings you through creating embeddings, chunking, setting up a vector database, working with a prompt template, and creating a simple gradio app interface for querying the bot will give you a picture of a RAG system and how to work with it.

Agents vs RAG: what should data scientists learn first?

RAG first. Here's why.

Most agents use retrieval as one of their core tools. If you don't understand how retrieval works, you're treating it as a black box inside a system you're also trying to understand. This makes agents both harder to learn and, without understanding RAG, nearly impossible to debug when something goes wrong.

RAG also teaches the foundational concepts that agent architecture builds on. Chunking and context management are really just "how do you get the right information with a limited window," a problem that agents often face. Agents feel like a natural extension after learning RAG rather than an entirely new paradigm.

The learning curve difference is also significant. A data scientist can build a working RAG system prototype in an afternoon and understand what it does. Agents involve orchestration logic, tool definitions, state management, multi-step failure modes, and unpredictable LLM behavior in addition. The surface area is much larger and the feedback loop is slower. Starting there without a foundation tends to produce frustration more than learning.

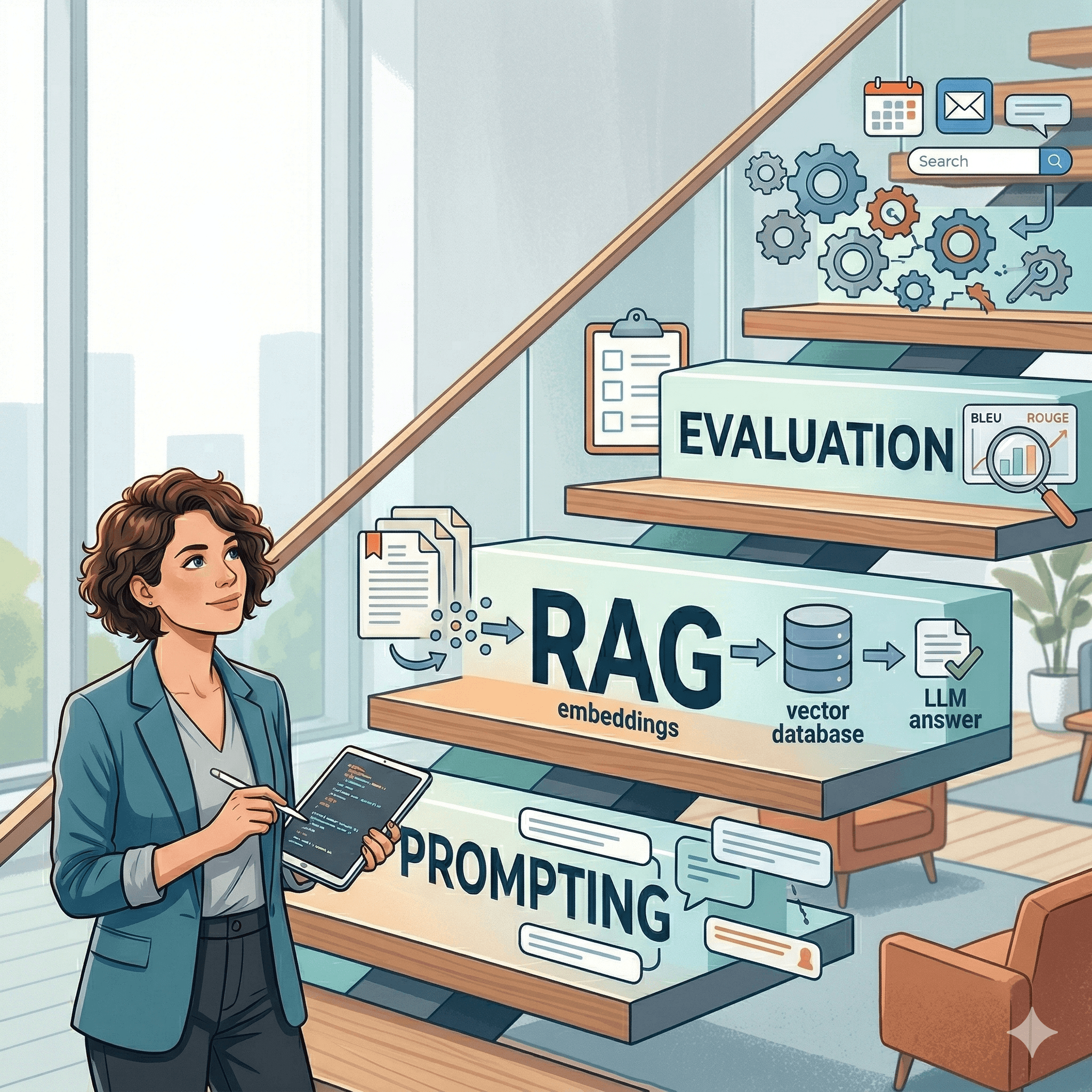

A sequencing that works in practice: learn prompt engineering → RAG → tool use → single agents → multi-agent systems. Each step builds on the last.

What does a GenAI skills roadmap for data scientists look like?

Here we go from “learning how the model works” all the way through CI/CD. It’s likely you don’t need to go all the way through multi-agent orchestration or deploying agents to production, but understanding the concepts in Layers 1-4 would give you a valuable foundation.

Layer 1: Foundations

Start with how LLMs actually work, not at a research level, but enough to set realistic expectations. What is a context window (The maximum amount of tokens the model can use at one time) and why does it constrain your design choices? Why do LLMs hallucinate? What is tokenization and how does it affect cost and latency? This layer is about building the right mental model before you touch any tooling.

Layer 2: Prompt engineering

This is where most practical LLM work begins. System prompts, structured prompt techniques (e.g. few-shot examples, decomposition, output constraints). You will use these skills in every layer that follows; they are a foundation you keep building on.

Layer 3: RAG

Learn about embeddings (and embeddings models), vector similarity search, chunking strategies, and retrieval evaluation. This is where you go from "I can prompt a model" to "I can build something useful with private data."

Layer 4: Evaluation

Before moving to agents or more complex systems, learn how to measure whether an LLM system is working. LLM-as-judge, human review workflows, building test sets, tracking regressions. This is one of the most important parts and is often skipped in simple demos.

Layer 5: Agents and tool use

Learn single-agent patterns first. Giving a model access to tools, handling multi-step reasoning, and managing state. Then multi-agent orchestration once single-agent patterns are solid. This is where complexity compounds quickly.

Layer 6: Production and LLMOps

Monitoring, versioning, CI/CD for LLM systems, cost management, handling model updates. This layer is often owned by ML engineers, but data scientists benefit from understanding it, especially the monitoring piece.

Which LLM projects should data scientists build first?

The easiest place to start is going to be a document Q&A with RAG project. If you’re currently an employed data scientist, use a very narrowly defined subset of internal docs. This might be using the maternity leave policy, the vacation and other leave policies or some other specific docs.

The point is that it is documentation that exists and is narrow in scope. Bonus points if the information is something that other employees access often for information. Are there sales agents who answer specific questions to customers who call in? Ideally, something that is not updated frequently is a great first starting project, so that you’re not building a setup where new information needs to constantly be turned into embeddings and added to the database to keep the information fresh.

If you’re not employed and looking to add a project to your portfolio, we suggest you mimic the above!

Ask your favorite LLM to give you the data a sales agent would use to answer customer questions and tell the LLM to structure this the same way you’d expect a sales agent to access it. Include product information, FAQs, shipping and fulfillment policy, returns and warranty policy, related product, and customer service escalation guides. Get this information for a small subset of hypothetical products and you’re ready to start. If you’re currently interviewing at a company with physical or digital products, make synthetic data around something that mimics products that would be in their catalog and consider adding brand elements to the dashboard.

Get your app up and running, use an LLM to help you write a fantastic README, make sure to add your biggest learnings and insights, and reference this project in future interviews or use it to get buy-in at your current org to work on a bigger project and take on more scope.

How can data scientists stay competitive without going too deep into GenAI?

This is one of the more honest questions in the field right now, and it deserves a direct answer: most data scientists do not need to go deep into GenAI to remain competitive. What they need is enough literacy to work alongside it, contribute to decisions about it, and recognize when it is and isn't the right tool.

You do not need to build every system to understand it well enough to evaluate it. Understanding how RAG works, what agents can and cannot reliably do, and where LLM systems typically fail gives you enough to ask the right questions, spot the red flags, and contribute meaningfully to projects being built at your organization. That is a different bar than being the person who builds them, and for many data scientist roles, it is the right bar.

In Summary:

Data scientists don’t need to become LLM engineers, but they will benefit form understanding enough to evaluate, question, and collaborate on LLM systems effectively.

RAG is often the most practical starting point, with prompt engineering and evaluation forming the core foundation before moving into agents.

The goal isn’t to build everything, but to develop the judgment to know what to use, how to test it, and where it can fail.

Up to 50% Off Maven Pro Plans

Spring Savings Sale

Take advantage of this limited-time offer and save up to 50% off unlimited Maven access!

Kristen Kehrer

Data Science & AI Expert

I love building coding demos and educating others around topics in AI and machine learning. This past year I've leveraged computer vision to build things like a school bus detector that I use during the school year to get my kids on the bus. I've most recently been playing with semantic video search, vector databases, and building simple chatbots using OpenAI and LangChain.

Frequently Asked Questions

Should data scientists learn LLMs?

Yes. LLMs are quickly becoming a core tool for working with unstructured data, automation, and building intelligent applications. Data scientists who understand them will have an advantage in designing, evaluating, and improving modern AI systems.

Do data scientists need to learn RAG?

RAG is the most practical LLM skill for data scientists because it comes up frequently when an organization wants AI to answer questions about their internal data. You don't necessarily need to be able to build it, but understanding how retrieval works means you can contribute to scoping, testing, and debugging even when an MLE or developer is doing the build.

Should beginners learn RAG before agents?

Yes -- RAG first, agents second. Most agents use retrieval as a core tool, so learning agents without understanding RAG means treating a critical piece of the system as a black box.

What’s the difference between agents and RAG?

RAG solves a knowledge problem, it gives a model access to information it wasn't trained on. Agents extend LLMs to take multi-step actions using tools and memory, they can reason, make decisions, and take actions across multiple steps.

Do data scientists need to fine-tune LLMs?

Most don't, and many organizations don’t fine-tune either. They’re often accessing an LLM through an API. RAG and prompt engineering solve many practical business problems without the cost and complexity of fine-tuning, so those are worth mastering first. Fine-tuning becomes a consideration for very high-volume, latency-sensitive, and well-defined tasks.