If you’ve spent any time around data or AI lately, you’ve probably heard some version of the same question: "Are large language models (LLMs) replacing machine learning (ML)?"

It’s an understandable concern. LLMs feel incredibly powerful, they’re showing up everywhere, and they’ve already reshaped parts of the data workflow. But the reality is more nuanced, and a lot more useful for your career.

The goal isn’t to pick sides between “ML” and “LLMs.” It’s to understand what each does well, where they actually overlap, and how to use both effectively in a world where data work is evolving fast.

In this article, we’ll walk through some of the most common questions around how LLMs and traditional machine learning differ, where each one shines, and how to decide which approach actually fits the problem you’re trying to solve. Let's dig in:

Will LLMs replace traditional machine learning?

No, LLMs will not replace traditional ML, because they solve for different use cases.

It’s similar to when deep learning became popular and data scientists started throwing deep learning at problems that didn’t require it. Part of your job is to understand the data structure and the problem you’re solving, and to apply the right techniques.

LLMs are built for unstructured data: language, reasoning, generation, and text understanding. Traditional machine learning is built for structured, tabular data: numerical predictions, classification, forecasting, anomaly detection. These are not the same problem, which means they're not really competing.

Although the focus of the business may change and there may be more opportunities for building LLM systems, companies will still need insight into traditional use cases like customer retention, customer lifetime value, and demand forecasting, all of which are best suited to traditional machine learning.

Traditional NLP pipelines, things like intent classification, named entity recognition, sentiment analysis, and text categorization, were often complex, brittle, and expensive to maintain.

LLMs have genuinely displaced a lot of that work, particularly in cases where labeled training data was scarce or the language was too varied for a rules-based or classical approach to handle well.

LLMs are absorbing part of the experimental and preprocessing layers of ML workflows, while the core production modeling layer remains ML's territory for structured problems. But the replacement narrative misses the point a bit. These tools are better understood as colleagues than competitors.

What is the difference between traditional machine learning and LLMs?

Traditional machine learning models are trained for a specific, narrowly defined task (predict this outcome, classify this input, forecast this number) using structured data with defined features that were determined to be relevant to the problem you’re solving. The model is optimized for that single task and nothing else.

LLMs work differently. They're trained on large amounts of text data and learn to understand and generate language across a wide range of tasks.

What kinds of problems are LLMs best at solving?

LLMs excel when the input is language and the output requires understanding, generating, or reasoning over text.

Summarization is an obvious use case: taking a long document and distilling it into what matters. Question answering lets users query a knowledge base in plain language rather than learning a query syntax. Document extraction pulls structured information out of unstructured sources like contracts, medical notes, or support tickets.

Chatbot and assistant workflows use LLMs to handle open-ended conversations that would be impossible to script in advance. Text generation produces drafts, reports, and communications at scale. Semantic search goes beyond keyword matching to find content based on meaning rather than exact terms.

And natural language classification or routing — deciding where a customer inquiry should go based on what they actually said — becomes dramatically simpler when a model understands language rather than just pattern-matching on words.

The reason LLMs feel so broadly capable right now is that language touches almost every business process. Wherever a human was previously required to read, interpret, or write something, there's now a question worth asking about whether an LLM can do it faster.

When is traditional machine learning still the better choice?

Traditional machine learning isn't going anywhere for the problems it was built to solve.

The bread and butter of enterprise data science (demand forecasting, customer analytics like churn and lifetime value, fraud and risk modeling, pricing and optimization, and recommendation systems) has not moved to LLMs, and there's no reason to expect it will. These are structured prediction problems.

Where the landscape has genuinely shifted is in a narrower band of NLP work that used to require significant effort: sentiment analysis, intent detection, named entity recognition, document classification, and information extraction. These problems were always being solved with traditional ML approaches, but LLMs and transformer-based models now do them better with less friction.

And then there's a third category that LLMs didn't take from traditional ML, because traditional ML was never doing them in the first place: summarization, open-ended Q&A over documents, generative content, conversational interfaces. LLMs created new capabilities here, not displacement.

Why hasn’t traditional ML become obsolete?

Traditional ML hasn’t become obsolete because the problems it was built to solve haven’t become obsolete, and many core business problems are not language problems.

In principle, an LLM can solve a churn prediction task, but it is not the best tool for the core prediction model, and using one usually adds complexity the problem doesn't need. Traditional ML models like gradient‑boosted trees remain simpler, cheaper, and more standard for production churn scoring, and traditional ML still outperforms LLM‑based approaches on typical tabular benchmarks. One exploration of using LLMs for traditional ML‑style use cases is this survey on LLMs for tabular data.

In practice, LLMs are most useful alongside the churn model, generating synthetic feedback, translating SHAP values into narratives, or proposing recommendations and interventions, rather than replacing the supervised ML model that estimates churn probability.
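As a rough illustration of the "translating SHAP values into narratives" idea, here is a minimal sketch that turns per-feature contributions from a churn model into a prompt for an LLM. Everything named here is an assumption made for the example: the function `build_explanation_prompt`, the customer ID, the feature names, and the SHAP numbers are all hypothetical, and the step that actually sends the prompt to an LLM is left out.

```python
def build_explanation_prompt(customer_id, churn_probability, shap_values, top_k=3):
    """Build an LLM prompt from a churn score and its SHAP contributions.

    shap_values maps feature name -> contribution (positive raises churn risk).
    The supervised model still produces the score; the LLM only words it.
    """
    # Keep the top_k features with the largest absolute contribution.
    ranked = sorted(shap_values.items(), key=lambda kv: abs(kv[1]), reverse=True)
    driver_lines = []
    for feature, value in ranked[:top_k]:
        direction = "raises" if value > 0 else "lowers"
        driver_lines.append(f"- {feature}: {direction} churn risk (SHAP {value:+.2f})")

    return (
        f"Customer {customer_id} has a predicted churn probability "
        f"of {churn_probability:.0%}.\n"
        "Top drivers from the churn model:\n"
        + "\n".join(driver_lines)
        + "\nExplain these drivers in two plain-language sentences for an "
        "account manager. Do not mention drivers that are not listed."
    )


# Hypothetical values, made up for illustration only.
example_shap = {
    "days_since_last_login": 0.31,
    "support_tickets_90d": 0.12,
    "tenure_months": -0.20,
    "plan_tier": 0.03,
}
prompt = build_explanation_prompt("C-1042", 0.72, example_shap)
print(prompt)
```

The design point is the division of labor: the probability and the drivers come from the supervised model, and the LLM is only asked to rephrase what it is given.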

For these use cases, tree‑based models plug cleanly into existing MLOps and validation workflows (backtesting, monitoring, model risk review), so they are much easier to validate and deploy than LLM‑based systems, and they perform better than an LLM would.

How should teams decide when to use ML vs LLM?

When choosing an approach, teams can use this ML vs LLM Decision Framework:

Layer 1: The Real Question That Matters First

Is the core problem fundamentally a language problem?

By "language problem" we mean: does solving it require understanding, generating, or reasoning over natural language as the primary input or output, that is, as the substance of the task?

Sentiment analysis on free-text reviews → yes

Churn prediction from behavioral features → no

Summarizing call transcripts → yes

Fraud detection on transaction data → no

Extracting structured fields from unstructured contracts → yes

Demand forecasting → no

If the answer is no, you're done. Use ML. Full stop. No further criteria needed.

If the answer is yes, move to Layer 2.

Layer 2: Is There a Well-Defined, Measurable Output?

This is where you separate problems that look like language problems but are actually structured prediction problems in disguise.

Ask: Can you define what "correct" looks like with a label, schema, or metric you can optimize against?

Sentiment analysis: the output is actually well-defined (positive/negative/neutral or a score), so Layer 2 correctly routes this away from a generative LLM and toward traditional, non-LLM sentiment analysis techniques.

Summarizing call transcripts: the output is open-ended by definition, so it passes Layer 2 and moves to Layer 3.

Extracting structured fields from contracts: this is the interesting one. It has a defined schema, but the reasoning required to find and interpret the fields is genuinely complex language understanding, so it moves on to Layer 3 for further inspection.

Layer 3: Does the Task Require Reasoning Across Context?

If you've made it here, the input is language and the output isn't cleanly definable — or the reasoning required to produce even a structured output is genuinely complex. Now ask:

Does solving this require holding and reasoning across extended context, ambiguity, or open-ended instruction?

Summarizing call transcripts → yes — the output is open-ended by definition, there's no fixed schema to optimize against

Extracting structured fields from contracts → yes — the schema is defined, but finding and interpreting those fields requires reasoning through dense, ambiguous legal language that a rules-based or classification approach will break on

This is the layer where LLMs genuinely earn their place, because the problem structure actually requires what they're good at.
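The three layers above can be sketched as a small routing function. This is one possible reading of the framework, not a definitive implementation: the `Problem` class, its field names, and the returned labels are assumptions made for the example, and the final fallback covers a combination the framework does not explicitly address.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    name: str
    is_language_problem: bool         # Layer 1
    has_well_defined_output: bool     # Layer 2
    needs_contextual_reasoning: bool  # Layer 3


def choose_approach(problem: Problem) -> str:
    # Layer 1: if language is not the substance of the task, use ML.
    if not problem.is_language_problem:
        return "traditional ML"
    # Layer 2: a well-defined output that needs no complex reasoning
    # is routed to traditional, non-LLM techniques.
    if problem.has_well_defined_output and not problem.needs_contextual_reasoning:
        return "non-LLM NLP"
    # Layer 3: reasoning across context or ambiguity is where LLMs fit.
    if problem.needs_contextual_reasoning:
        return "LLM"
    # Assumption: the framework does not cover this combination.
    return "unclear, revisit the problem framing"


examples = [
    Problem("churn prediction", False, True, False),
    Problem("sentiment analysis on reviews", True, True, False),
    Problem("summarizing call transcripts", True, False, True),
    Problem("contract field extraction", True, True, True),
]
for p in examples:
    print(f"{p.name}: {choose_approach(p)}")
```

The three boolean answers still have to come from a person who understands the problem; the function only makes the order of the questions explicit.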

What Doesn't Actually Drive the LLM vs ML Decision (And Why)

The following factors mostly affect how you implement a system rather than whether you choose ML vs LLM.

Hallucination tolerance

This is a risk threshold, not a selection criterion. If the problem doesn't pass Layer 1, hallucination tolerance is simply never relevant. If it does pass, tolerance informs how you deploy and validate, not whether you choose an LLM.

Labeled data availability

Text data without labels is a labeling cost problem, and LLMs can sometimes help you solve that problem by generating synthetic labels, doing weak supervision, or bootstrapping an annotation pipeline. That's a legitimate use of LLMs. But it's very different from saying that the absence of labels turns a downstream task into an LLM problem when it otherwise isn't a fit.

Interpretability

The task and data type usually decide “ML vs LLM” before interpretability enters the picture. Churn, fraud, and credit scoring stay tabular‑ML problems even if the regulator relaxes interpretability; a free‑form drafting assistant stays an LLM use case even if interpretability demands are high. Explainability needs constrain how you implement whichever paradigm you choose. It's a deployment constraint, not a selection fork.

Speed and cost

Second-order operational constraints. They matter enormously at implementation time, but they don't tell you which approach fits the problem. Optimizing cost on the wrong tool is still the wrong tool.

Infrastructure and governance complexity

This is "does our current maturity level allow us to deploy and maintain this responsibly." That affects sequencing and rollout, not the fundamental fit assessment.

Can LLMs and traditional machine learning work together?

LLMs and traditional ML aren't competing approaches; they're complementary tools that can be sequenced together to solve problems neither handles as well alone.

A common pattern is using an LLM or transformer-based model to extract structured information from unstructured text — pulling key fields from contracts, categorizing customer feedback, identifying entities in clinical notes — and then feeding that structured output into a traditional ML model for downstream prediction. The language model does what it's good at, and the predictive model does what it's good at.
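A minimal sketch of that sequencing might look like the following, assuming a support-ticket churn scenario. The two stub functions are placeholders invented for this example: `fake_extract_fields` stands in for a real LLM extraction call and `fake_predict_churn` stands in for a trained model, with hand-picked numbers that do not come from any real data.

```python
from typing import Callable, Dict

Extractor = Callable[[str], Dict[str, float]]
Scorer = Callable[[Dict[str, float]], float]


def score_customer(
    ticket_text: str,
    behavioral_features: Dict[str, float],
    extract_fields: Extractor,
    predict_churn: Scorer,
) -> float:
    # Step 1: the language model turns free text into structured fields.
    extracted = extract_fields(ticket_text)
    # Step 2: merge them with the tabular features. The "text_" prefix
    # avoids collisions with existing column names.
    row = dict(behavioral_features)
    row.update({f"text_{key}": value for key, value in extracted.items()})
    # Step 3: the predictive model scores the combined row.
    return predict_churn(row)


def fake_extract_fields(text: str) -> Dict[str, float]:
    # Placeholder for an LLM call; keyword checks are used here only
    # so the example runs without any external service.
    lowered = text.lower()
    return {
        "mentions_cancellation": 1.0 if "cancel" in lowered else 0.0,
        "mentions_billing": 1.0 if "charge" in lowered else 0.0,
    }


def fake_predict_churn(row: Dict[str, float]) -> float:
    # Placeholder for a trained model; these numbers are made up.
    score = 0.2
    if row.get("text_mentions_cancellation"):
        score += 0.5
    if row.get("logins_last_30d", 0) < 3:
        score += 0.2
    return min(score, 1.0)


score = score_customer(
    "I was charged twice and I want to cancel.",
    {"logins_last_30d": 1, "tenure_months": 14},
    fake_extract_fields,
    fake_predict_churn,
)
print(f"churn score: {score:.2f}")
```

Passing the extractor and the scorer in as arguments keeps the two halves independently testable, so either one can be swapped out without touching the other.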

This kind of hybrid architecture is growing in production environments, particularly in industries like healthcare, financial services, and customer analytics, where both unstructured text and structured behavioral data exist in abundance.

What does machine learning still look like in 2026?

Machine learning hasn't gone anywhere; it's just no longer the loudest thing in the room.

In 2026, ML is less likely to be the centerpiece of an AI strategy conversation and more likely to be the right solution for several use cases on a list that also includes multiple LLM-based ones. Teams that spent years building ML intuition, understanding how to frame a modeling problem, evaluate outputs rigorously, and reason about tradeoffs, are better equipped to make good decisions about LLMs, too.

The fundamentals transfer: a practitioner who knows why a model fails, how to detect it, and what to do about it will navigate the current landscape more reliably than one chasing the newest capability.

What has changed is the context. Automation expectations have risen, the evaluation bar has gotten harder to meet, and the pressure to reach for the most powerful-sounding tool is real. ML holds its ground by being the right answer when the problem actually calls for it.

Kristen Kehrer

Data Science & AI Expert

I love building coding demos and educating others around topics in AI and machine learning. This past year I've leveraged computer vision to build things like a school bus detector that I use during the school year to get my kids on the bus. I've most recently been playing with semantic video search, vector databases, and building simple chatbots using OpenAI and LangChain.

Frequently Asked Questions

Will LLMs replace machine learning completely?

LLMs won’t replace ML any more than deep learning replaced statistics — the older paradigm quietly kept doing the jobs it was always good at. What changes over time is not which tool survives, but which problems each one gets assigned. ML remains the more reliable, auditable, and cost-efficient choice when the problem is structured and the output is defined. The more useful question for any team isn't which one wins, but which one fits.

What is the difference between traditional ML and LLMs?

Traditional supervised ML models learn patterns from structured data to make predictions against a defined output — a churn score, a fraud flag, a demand forecast. LLMs are trained on vast amounts of text to develop a generalized understanding of language, which they use to generate, summarize, classify, or reason over unstructured inputs. The practical difference comes down to problem structure.

Is machine learning still relevant in 2026?

Yes! ML didn't lose relevance. The problems ML has always been good at haven't gone away: structured prediction, classification, anomaly detection, and forecasting are still core to how most organizations actually operate. What's changed is that teams now evaluate ML alongside LLM use cases rather than treating tabular data as the only data they have to work with.

Are LLMs better than traditional ML?

Neither is better. They're optimized for different problem structures, which makes the comparison a bit like asking whether a scalpel is better than a saw. LLMs have a genuine advantage when the task requires language understanding, open-ended generation, or reasoning across ambiguous context; ML has a genuine advantage when the output is defined and the problem structure doesn't require language reasoning at all. The teams that get the most out of both are the ones who stopped asking which is better and started asking which one fits the problem in front of them.

Should data professionals still learn machine learning?

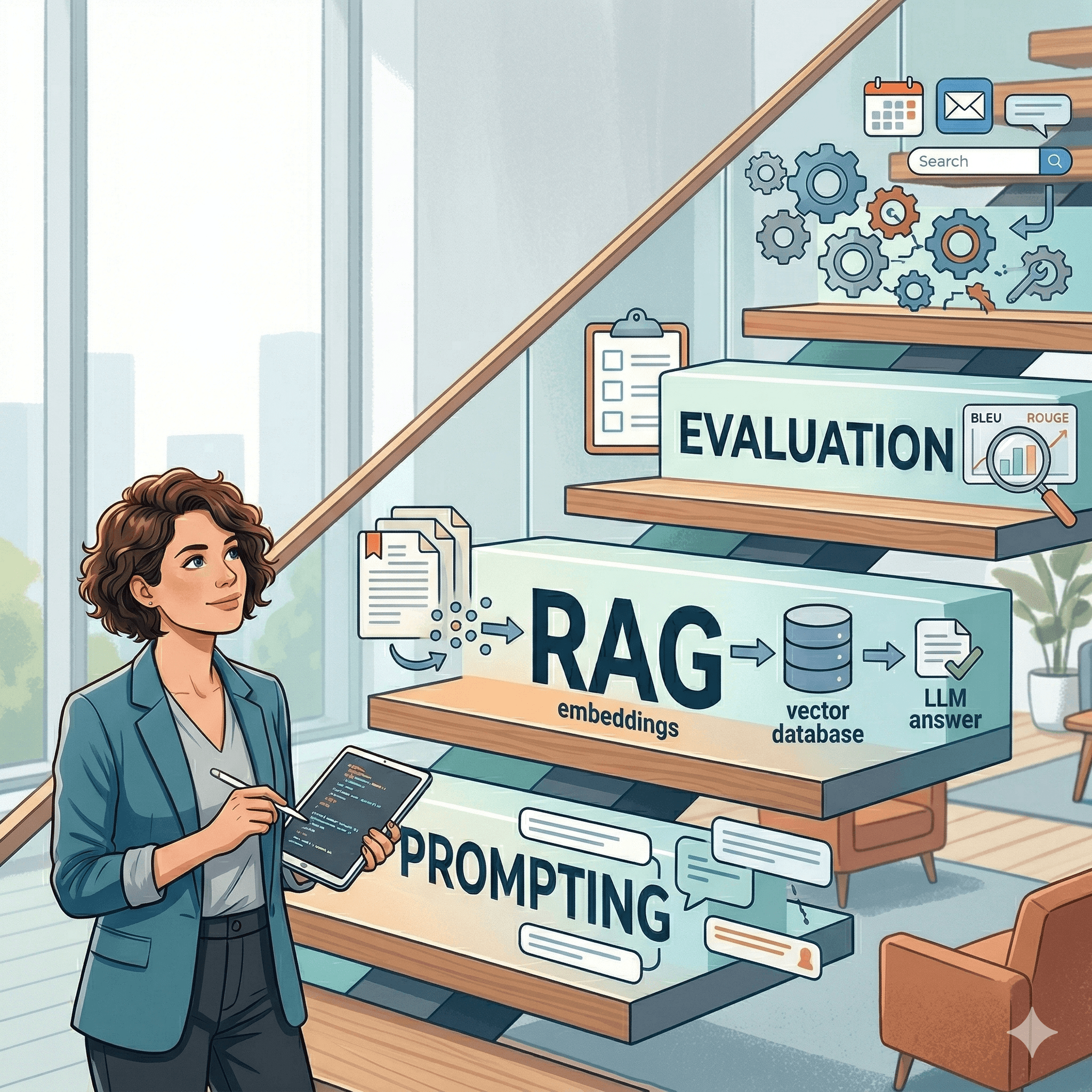

Yes. Learning ML still builds core skills in modeling, evaluation, and problem framing, all of which remain useful even as LLM adoption grows. It’s much easier to extend your traditional ML skills to working with similarity search and RAG than it is to start working with LLM systems without any prior modeling experience.